Nvidia reported fiscal fourth-quarter results that far exceeded analyst expectations, with revenue growth driven overwhelmingly by its data center segment, underscoring the company’s position as the primary supplier of global artificial intelligence infrastructure. The results, announced on Wednesday, sent the company’s stock up as much as 2% in extended trading, reflecting investor confidence in its continued leadership in the AI market. The quarter reinforces Nvidia’s central role in the AI buildout and points to sustained demand for its specialized AI processors.

A Quarter of Unprecedented Growth: Financial Highlights

Nvidia’s results for the fourth quarter of fiscal 2026 showed rapid expansion. Total revenue for the quarter rose 73% year over year to $67.9 billion, up from $39.3 billion in the same period a year earlier. That comfortably surpassed the $65.8 billion consensus estimate from analysts polled by LSEG.
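As a rough check on the headline figures, the growth rate and the margin over consensus can be recomputed from the reported numbers. The sketch below uses only the revenue and estimate values quoted above; the function and variable names are illustrative, not taken from any Nvidia or LSEG source.

```python
def pct_change(current: float, prior: float) -> float:
    """Return the percentage change from `prior` to `current`."""
    return (current - prior) / prior * 100.0


# All values in billions of dollars, as quoted in the article.
revenue_current = 67.9   # fiscal fourth quarter of 2026
revenue_year_ago = 39.3  # same quarter a year earlier
consensus = 65.8         # LSEG consensus estimate

yoy_growth = pct_change(revenue_current, revenue_year_ago)
beat_vs_consensus = pct_change(revenue_current, consensus)

print(f"Year-over-year growth: {yoy_growth:.1f}%")
print(f"Beat versus consensus: {beat_vs_consensus:.1f}%")
```

The growth rate comes out at about 72.8%, which rounds to the reported 73%, and revenue landed a little over 3% above the consensus estimate.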

The data center unit was the main source of that growth. The segment, which houses Nvidia’s market-leading artificial intelligence chips, posted 75% revenue growth to $62.3 billion. That exceeded StreetAccount’s estimate of $60.69 billion, and the segment now accounts for over 91% of Nvidia’s total sales. The scale of the segment shows how central Nvidia’s GPUs have become to powering the world’s most advanced AI computations.
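The segment share can be derived directly from the two revenue figures, and the growth rate implies an approximate year-ago base. The sketch below is illustrative only: the implied year-ago figure is back-calculated from rounded numbers in the article, not a reported value.

```python
# All values in billions of dollars, as quoted in the article.
total_revenue = 67.9
data_center_revenue = 62.3
data_center_growth = 0.75  # 75% year-over-year

# Share of total sales contributed by the data center segment.
share_of_total = data_center_revenue / total_revenue * 100.0

# Back out the year-ago revenue implied by the growth rate (an estimate,
# since the inputs are rounded).
implied_year_ago = data_center_revenue / (1.0 + data_center_growth)

print(f"Data center share of total revenue: {share_of_total:.1f}%")
print(f"Implied year-ago data center revenue: ${implied_year_ago:.1f}B")
```

This gives a share of roughly 91.8%, consistent with "over 91%," and an implied year-ago segment revenue of about $35.6 billion.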

Profitability followed suit, with net income almost doubling to $43 billion, or $1.76 per share, from $22.1 billion, or 89 cents per share, in the year-ago quarter. The near-doubling of earnings per share points to the company’s pricing power in a high-demand market.

The Indispensable Engine: Data Centers Drive AI Revolution

Nvidia’s data center business has become the veritable engine of the artificial intelligence revolution, providing the foundational hardware for training and deploying complex AI models across various industries. The company’s specialized Graphics Processing Units (GPUs), particularly its Hopper and Grace Blackwell architectures, are considered indispensable for hyperscale cloud providers, enterprises, and research institutions pushing the boundaries of AI.

A significant portion of this data center success is attributed to the "hyperscalers" – the world’s largest cloud computing providers: Alphabet (Google Cloud), Amazon (AWS), Meta (Facebook), and Microsoft (Azure). These tech giants, having reported their own quarterly results just weeks prior, provided Wall Street with a clear preview of Nvidia’s trajectory. Their ambitious capital expenditure (capex) forecasts for the year, driven primarily by the need to build out vast AI infrastructure, are projected to collectively approach $700 billion. This colossal investment directly translates into surging demand for Nvidia’s AI accelerators, as these companies race to offer leading-edge AI capabilities to their vast client bases. In her CFO commentary, Colette Kress confirmed that hyperscalers "remained our largest customer category," collectively accounting for just over 50% of data center revenue.

Beyond the raw processing power of its GPUs, Nvidia’s networking products are proving equally important. Within the data center business, sales of the company’s networking parts rose 263% year over year to $10.98 billion. These components interconnect hundreds, if not thousands, of GPUs into cohesive, high-performance computing clusters. The growth reflects strong adoption of Nvidia’s NVLink networking technology, which enables fast communication between GPUs, and its Spectrum-X Ethernet switches. The company recently secured new deals with large customers such as Meta, further strengthening its position in the high-speed interconnect market that massive AI workloads depend on. The combination of Nvidia’s processors and its networking hardware creates an integrated ecosystem that competitors find challenging to replicate.
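A growth rate above 100% is easy to misread: a 263% increase means revenue is 3.63 times the year-ago level, not 2.63 times. The sketch below works through that conversion using the figures quoted in the article; the helper name is hypothetical, and the implied year-ago value is an estimate derived from rounded inputs.

```python
def growth_pct_to_multiple(growth_pct: float) -> float:
    """Convert a percentage increase into a multiple of the starting value."""
    return 1.0 + growth_pct / 100.0


# Revenue values in billions of dollars, as quoted in the article.
networking_revenue = 10.98
networking_growth_pct = 263.0
data_center_revenue = 62.3

multiple = growth_pct_to_multiple(networking_growth_pct)
implied_year_ago = networking_revenue / multiple
share_of_data_center = networking_revenue / data_center_revenue * 100.0

print(f"Revenue multiple versus a year ago: {multiple:.2f}x")
print(f"Implied year-ago networking revenue: ${implied_year_ago:.2f}B")
print(f"Networking share of data center revenue: {share_of_data_center:.1f}%")
```

On these numbers, networking revenue a year earlier would have been roughly $3 billion, and networking now makes up a little under 18% of data center revenue.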

Navigating Supply Chains and Strategic Expansion

The unprecedented demand for Nvidia’s AI chips has put immense pressure on its global supply chain. To mitigate risks and ensure continued capacity, Nvidia is strategically expanding its manufacturing footprint beyond its traditional concentration in Asia, venturing into the United States and Latin America. This diversification is a proactive measure to build resilience and redundancy into its operations.

A notable development in this strategy is the production of Blackwell GPUs at Taiwan Semiconductor Manufacturing Co.’s (TSMC) new chip fabrication plants in Arizona. This move signifies a critical step towards localizing advanced chip manufacturing in the U.S. Furthermore, some of Nvidia’s rack-scale systems are now being assembled at a large, state-of-the-art Foxconn plant in Mexico. These strategic geographical shifts are designed to address potential geopolitical risks, reduce lead times, and enhance the overall robustness of its supply chain.

In its financial filing, Nvidia articulated the rationale behind these efforts: "These moves are expected to strengthen our supply chain, add resiliency and redundancy, and meet the growing demand for AI infrastructure." The company, however, also acknowledged the inherent challenges, stating, "Our ability to increase manufacturing capabilities will depend on the local region’s manufacturing ecosystem’s capacity to ramp production supply to the required volume and on a timely basis." This nuanced perspective reflects the complex realities of establishing advanced manufacturing capabilities in new regions.

A Glimpse into the Future: Vera Rubin and Next-Gen AI

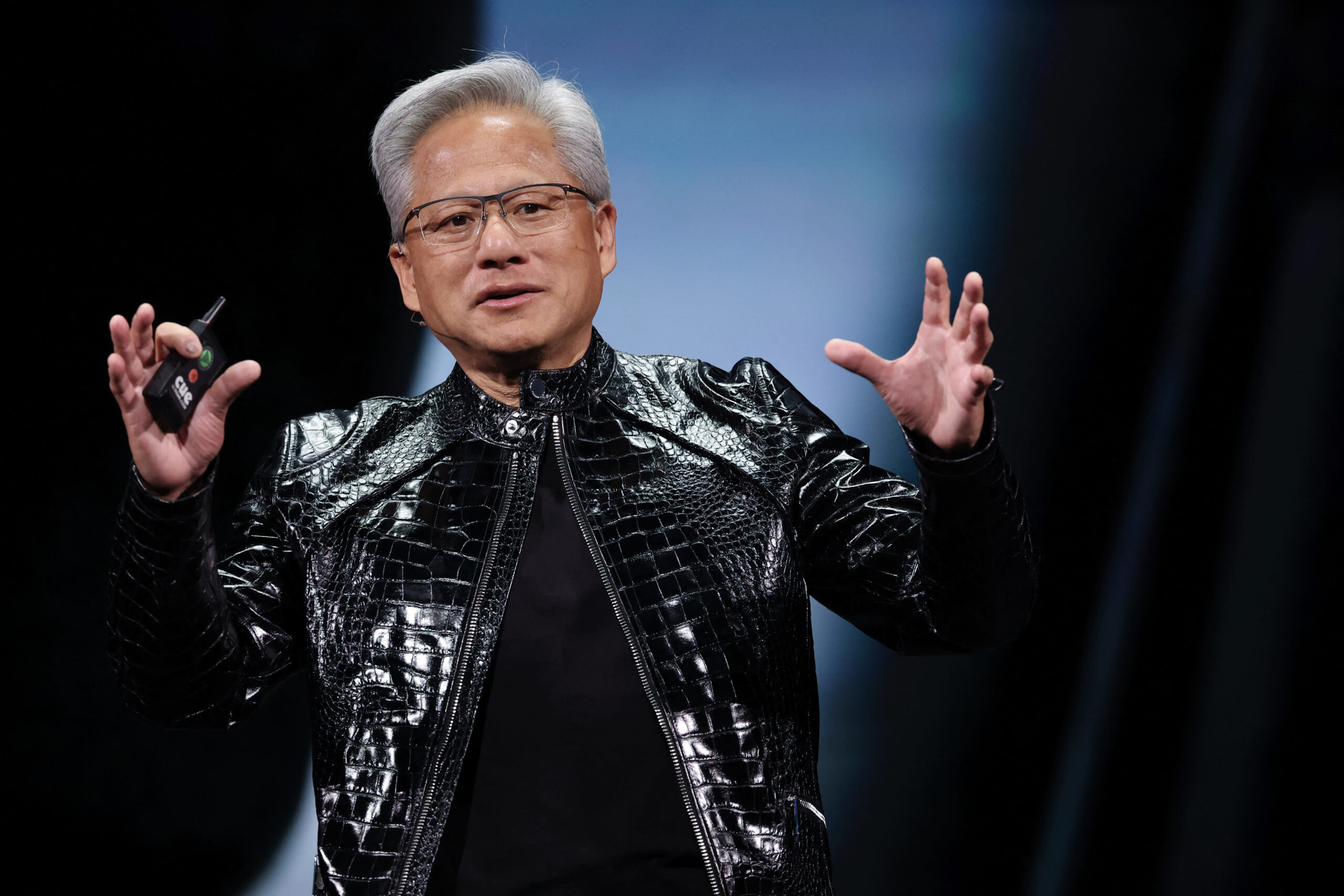

Attention is also turning to Nvidia’s roadmap, particularly the upcoming release of its next-generation Vera Rubin rack-scale systems. Unveiled at the annual Consumer Electronics Show (CES) in Las Vegas on January 6, 2026, by CEO Jensen Huang, the Vera Rubin platform is the planned successor to the highly successful Grace Blackwell architecture.

This new system is not just an incremental upgrade; it is expected to deliver a groundbreaking 10 times more performance per watt than its predecessors. This leap in energy efficiency is critically important at a time when data centers globally are grappling with major power constraints and escalating operational costs. By dramatically improving the performance-to-power ratio, Vera Rubin aims to enable even larger and more complex AI models to be trained and deployed more sustainably.

Nvidia confirmed that it had "shipped our first Vera Rubin samples to customers earlier this week," with production shipments slated to commence in the second half of 2026. This aggressive timeline underscores Nvidia’s commitment to maintaining its technological edge and continuously innovating to meet the escalating demands of the AI industry. The Vera Rubin platform is anticipated to further solidify Nvidia’s leadership, offering an unparalleled combination of raw computational power and environmental efficiency.

Challenges and Diversification: Gaming, Automotive, and Professional Visualization

While the data center business continues to grow rapidly, other segments of Nvidia’s portfolio presented a more mixed picture. The gaming unit, historically Nvidia’s largest revenue driver, grew 47% from a year ago to $3.7 billion but declined 13% from the previous quarter. This dip is partly attributed to broader market dynamics but also hints at strategic prioritization within Nvidia.
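The gaming figures show how a segment can be up sharply year over year and still down sequentially. The sketch below backs out the approximate year-ago and prior-quarter revenue implied by the two percentages; both results are estimates from rounded inputs rather than reported values, and the variable names are illustrative.

```python
# Gaming revenue in billions of dollars, as quoted in the article.
gaming_revenue = 3.7
yoy_change = 0.47          # up 47% from a year ago
sequential_change = -0.13  # down 13% from the previous quarter

# Dividing by (1 + change) recovers the base period the change was measured from.
implied_year_ago = gaming_revenue / (1.0 + yoy_change)
implied_prior_quarter = gaming_revenue / (1.0 + sequential_change)

print(f"Implied year-ago gaming revenue: ${implied_year_ago:.2f}B")
print(f"Implied prior-quarter gaming revenue: ${implied_prior_quarter:.2f}B")
```

The implied path is roughly $2.5 billion a year ago, about $4.25 billion in the previous quarter, and $3.7 billion now, which is why both descriptions hold at once.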

Analysts have speculated that Nvidia may defer the launch of a new gaming GPU this year, a decision potentially influenced by ongoing memory constraints. The global shortage of High Bandwidth Memory (HBM), a crucial component for advanced AI accelerators, is compelling chipmakers to prioritize its allocation towards high-margin AI processors. For Nvidia, this means channeling precious HBM resources towards rack-scale systems like the 72-GPU Grace Blackwell, which offer significantly higher revenue per unit. Finance chief Colette Kress explicitly stated in her commentary that the company expects supply constraints to be a headwind to Nvidia’s Gaming business "in the first quarter of fiscal 2027 and beyond," indicating a persistent challenge.

In the automotive sector, which encompasses chips for autonomous vehicles and robotics, Nvidia reported sales of $604 million for the quarter. While up 6% from a year earlier, this figure fell short of analysts’ expectations of $654.8 million, according to StreetAccount. The miss suggests that while the long-term potential for AI in automotive remains strong, the ramp-up in adoption may be more gradual than initially projected.

Conversely, Nvidia’s professional visualization business delivered an exceptionally strong performance, with revenue surging by 159% year-over-year to $1.32 billion. This significantly exceeded expectations of $755.4 million, as per StreetAccount. This segment, which includes high-end GPUs for workstations used in design, content creation, and scientific visualization, is benefiting from increased demand for powerful computing solutions across various professional fields, often leveraging AI capabilities in their workflows.
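The segment results can be compared on a common basis by expressing each one as a percentage surprise against its StreetAccount estimate. The sketch below uses only the actual and estimated figures quoted in the article; the `SegmentResult` class is a hypothetical helper for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SegmentResult:
    name: str
    actual: float    # reported revenue, $ millions
    estimate: float  # StreetAccount estimate, $ millions

    @property
    def surprise_pct(self) -> float:
        """Percentage by which actual revenue differed from the estimate."""
        return (self.actual - self.estimate) / self.estimate * 100.0


# Figures as quoted in the article, converted to millions of dollars.
segments = [
    SegmentResult("Data center", 62_300.0, 60_690.0),
    SegmentResult("Automotive", 604.0, 654.8),
    SegmentResult("Professional visualization", 1_320.0, 755.4),
]

for seg in segments:
    verdict = "beat" if seg.surprise_pct > 0 else "miss"
    print(f"{seg.name:<28} {seg.surprise_pct:+6.1f}%  ({verdict})")
```

Measured this way, data center beat by under 3%, automotive missed by nearly 8%, and professional visualization beat by roughly 75%, so the largest surprise in percentage terms came from one of the smaller segments.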

Strategic Investments and Key Partnerships

Beyond its core product lines, Nvidia has been actively investing in the broader AI ecosystem, pouring substantial capital into large AI labs and other companies within the industry. The company disclosed in its annual filing that it invested an impressive $17.5 billion in private companies and infrastructure funds during the year, "primarily to support early-stage startups." These investments are strategic, aiming to foster innovation, expand the reach of AI technologies, and potentially secure future revenue streams by nurturing companies that will rely on Nvidia’s hardware. However, Nvidia also prudently noted the inherent risks, stating that these investments "may not become profitable in the near term, or at all, and there can be no assurance that we will realize a return on our investments."

A highly anticipated partnership remains in flux: the potential collaboration with OpenAI. CEO Jensen Huang addressed the situation directly on Wednesday’s analyst call, stating that Nvidia continues "to work with OpenAI toward a partnership agreement and believe we are close." The two companies had initially announced a $100 billion deal in September of the previous year, which promised to be a landmark agreement in the AI sector. However, the deal has yet to be finalized, as Nvidia noted in its quarterly filing that there is "no assurance that a transaction will be completed." A finalized partnership with OpenAI, a leading developer of generative AI technologies, would further cement Nvidia’s position at the forefront of AI development and deployment.

Market Reaction and Broader Implications

Nvidia’s performance has been reflected in its share price. As of Wednesday’s close, the company’s shares were up 5% year-to-date in 2026, outperforming the broader Nasdaq index, which was down 0.4% over the same period. Among the other members of the "trillion-dollar club" of tech giants, only Apple showed gains, albeit less than 1%, highlighting Nvidia’s relative momentum. This outperformance underscores investor confidence in Nvidia’s dominant market position and its ability to deliver robust growth amid the AI boom.

The implications of Nvidia’s continued ascent are profound for the entire technology landscape. Its indispensable role in AI development means that its financial health and technological advancements have ripple effects across cloud computing, enterprise software, and even national strategic capabilities. The company’s proactive measures in supply chain diversification reflect a growing awareness of geopolitical and logistical risks, aiming to build a more resilient global tech infrastructure. As AI continues to evolve at a breakneck pace, Nvidia’s innovative drive, exemplified by the upcoming Vera Rubin platform, ensures it remains not just a beneficiary but a primary driver of this transformative technological era.

— CNBC’s Salvador Rodriguez contributed to this report.