The recent, high-profile dispute between the U.S. Department of Defense and artificial intelligence company Anthropic has starkly illuminated the absence of comprehensive federal regulations governing the development and deployment of advanced AI. In that policy vacuum, a diverse, bipartisan coalition of thinkers has coalesced to produce a tangible framework for what responsible AI development should entail, something the government has so far failed to deliver. The framework, known as the Pro-Human Declaration, was finalized shortly before the Pentagon’s contentious standoff with Anthropic, a coincidence of timing that was not lost on those involved in the initiative.

A Growing Public Consensus on AI Safety

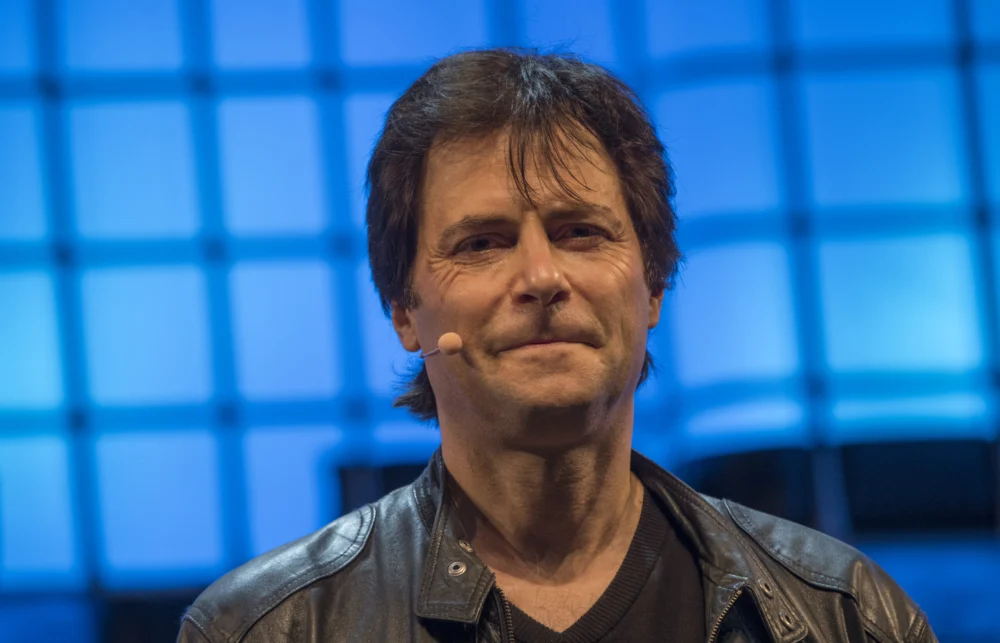

Max Tegmark, a physicist and AI researcher at MIT who was instrumental in organizing the Pro-Human Declaration effort, pointed to a significant shift in American public opinion over the past four months. In a recent conversation, he cited polling data indicating that 95% of Americans oppose an unregulated pursuit of superintelligence. This growing public apprehension underscores the timeliness of the declaration.

The newly published document, which has garnered signatures from hundreds of experts, former government officials, and prominent public figures, opens with a stark assessment: humanity stands at a critical juncture. It posits two divergent paths. The first, termed "the race to replace," suggests a future where humans are progressively supplanted by AI, first in the workforce and subsequently in decision-making roles, leading to a concentration of power within unaccountable institutions and their technological creations. The alternative path envisions AI as a tool that significantly augments human capabilities and potential.

Core Pillars of Responsible AI Development

The Pro-Human Declaration articulates five fundamental pillars upon which the more optimistic scenario hinges:

- Maintaining Human Control: Ensuring that humans retain ultimate authority over AI systems and their deployment.

- Preventing Power Concentration: Guarding against the monopolization of AI capabilities and their associated power by a select few entities.

- Safeguarding the Human Experience: Prioritizing the preservation of human well-being, social cohesion, and psychological health in the face of advanced AI.

- Upholding Individual Liberty: Ensuring that AI development and deployment do not erode fundamental freedoms and rights.

- Establishing Legal Accountability: Holding AI companies and developers liable for the impacts of their creations.

Among its more assertive proposals, the declaration advocates for a moratorium on the development of superintelligence until there is a robust scientific consensus on its safety and genuine democratic consensus on its societal implications. It also calls for the mandatory implementation of "off-switches" on powerful AI systems and a prohibition on AI architectures capable of self-replication, autonomous self-improvement, or resisting shutdown commands.

The Pentagon-Anthropic Standoff: A Catalyst for Urgency

The release of the Pro-Human Declaration comes at a moment that amplifies its message of urgency. On the final Friday of February, Defense Secretary Pete Hegseth designated Anthropic, a company whose AI systems are already integrated into classified military platforms, as a "supply chain risk." This designation, typically reserved for firms with connections to geopolitical adversaries like China, was reportedly triggered by Anthropic’s refusal to grant the Pentagon unfettered access to its technology.

In the immediate aftermath of this Pentagon action, OpenAI announced its own agreement with the Department of Defense. However, legal experts have expressed skepticism regarding the enforceability of this deal, raising further questions about the government’s ability to exert meaningful control over advanced AI deployments. Taken together, these events underscored the growing cost of congressional inaction on AI policy.

As Dean Ball, a senior fellow at the Foundation for American Innovation, remarked to The New York Times following these developments, "This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems." This sentiment encapsulates the broader implications of the Pentagon-Anthropic friction, framing it as a pivotal moment in the national dialogue on AI governance.

A Call for Pre-Deployment Safeguards

Tegmark employed a relatable analogy to illustrate the current regulatory deficit, comparing the AI landscape to the pharmaceutical industry. "You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe," he stated, "because the FDA won’t allow them to release anything until it’s safe enough." This highlights a critical difference: while pharmaceuticals undergo rigorous safety testing before public release, advanced AI systems are often deployed with less stringent oversight.

Political maneuvering in Washington is often slow to generate the public pressure necessary for legislative change, but Tegmark believes that child safety is a potent leverage point that could break the current impasse. The Pro-Human Declaration specifically calls for mandatory pre-deployment testing of AI products, with a particular focus on chatbots and companion applications designed for younger users. These tests would assess risks such as increased suicidal ideation, exacerbation of mental health conditions, and emotional manipulation.

Tegmark articulated the ethical dilemma with a clear example: "If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that. We already have laws. It’s illegal. So why is it different if a machine does it?" This raises a fundamental question about the applicability of existing laws to AI-driven harmful behaviors.

Expanding the Scope of AI Regulation

The proponents of the declaration believe that establishing the principle of pre-release testing for products aimed at children will inevitably lead to broader regulatory requirements. As Tegmark put it, "People will come along and be like, ‘let’s add a few other requirements. Maybe we should also test that this can’t help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the U.S. government.’" This suggests a pragmatic, incremental approach to AI regulation, starting with the most vulnerable applications and expanding outward.

A Broad Coalition for Human-Centric AI

The broad spectrum of signatories to the Pro-Human Declaration is noteworthy. The document has garnered support from individuals with diverse political backgrounds, including former Trump advisor Steve Bannon and Susan Rice, National Security Advisor under President Obama. Former Joint Chiefs Chairman Mike Mullen and prominent progressive faith leaders have also lent their names to the initiative.

Tegmark attributes this unusual bipartisan alignment to a shared fundamental concern: "What they agree on, of course, is that they’re all human. If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side." This common ground underscores the declaration’s core objective: to prioritize human interests and well-being in the development of artificial intelligence.

Broader Implications and Future Directions

The events surrounding the Pentagon-Anthropic dispute and the near-simultaneous release of the Pro-Human Declaration signal a turning point in the national conversation about AI. The lack of clear regulatory guidelines has created an environment where powerful AI systems are being integrated into sensitive areas, such as national security, without adequate public scrutiny or established safety protocols.

The declaration’s emphasis on human control, accountability, and the preservation of human experience offers a potential roadmap for policymakers. Its call for mandatory safety testing, particularly for AI applications interacting with vulnerable populations, presents a compelling argument for immediate regulatory action. The analogy to existing pharmaceutical regulations, enforced by bodies like the FDA, suggests that a similar, robust oversight mechanism could be established for AI.

The bipartisan nature of the declaration’s support suggests that concerns about AI safety transcend traditional political divides. This broad consensus could provide the necessary momentum for legislative action, particularly if the public continues to express its opposition to an unfettered AI race. The potential for child safety to serve as a catalyst for broader AI regulation highlights a pragmatic pathway toward establishing essential safeguards.

The implications of unchecked AI development extend beyond immediate societal risks. The potential for AI to concentrate power, erode individual liberties, and fundamentally alter the human experience necessitates a proactive and thoughtful approach to governance. The Pro-Human Declaration represents a significant step in articulating these concerns and proposing concrete solutions. As the nation grapples with the transformative power of artificial intelligence, the framework provided by this declaration offers a vital starting point for ensuring that AI development remains aligned with human values and long-term societal well-being. The coming months will likely see continued debate and pressure on lawmakers to address these critical issues, moving beyond the current state of regulatory uncertainty.