Google has restructured the development team behind Project Mariner, its experimental artificial intelligence agent designed to navigate the Chrome browser and carry out complex web-based tasks on behalf of users. In recent months, several key members of the Google Labs division who were instrumental in researching and developing the prototype have been reassigned to higher-priority initiatives, according to individuals familiar with the internal changes. The projects receiving these resources were not named, but the move signals a broader shift in how the search giant intends to deploy its "computer use" capabilities. A Google spokesperson confirmed the organizational changes and emphasized that the foundational technology developed under the Project Mariner banner is not being abandoned; it is being integrated into the company’s overarching AI agent strategy. Some of these capabilities have already surfaced in the recently debuted Gemini Agent, which uses reasoning models informed by the Mariner research to interact more fluidly with digital environments.

The internal realignment at Google comes at a pivotal moment for the artificial intelligence industry. Major labs and technology conglomerates are currently racing to respond to the rapid emergence of high-performance agents such as OpenClaw and Claude Code. These tools, which have gained significant traction among the developer community, represent a departure from the browser-centric automation that dominated the industry’s imagination only a year ago. The shift is so pronounced that Nvidia CEO Jensen Huang recently characterized the rise of agentic systems as the dawn of a new operating system for computers. During a developer conference earlier this week, Huang noted that every global enterprise must now develop an "OpenClaw strategy" to remain competitive, framing these agents as the next evolution of corporate productivity.

The Rise and Stagnation of Browser-Based Agents

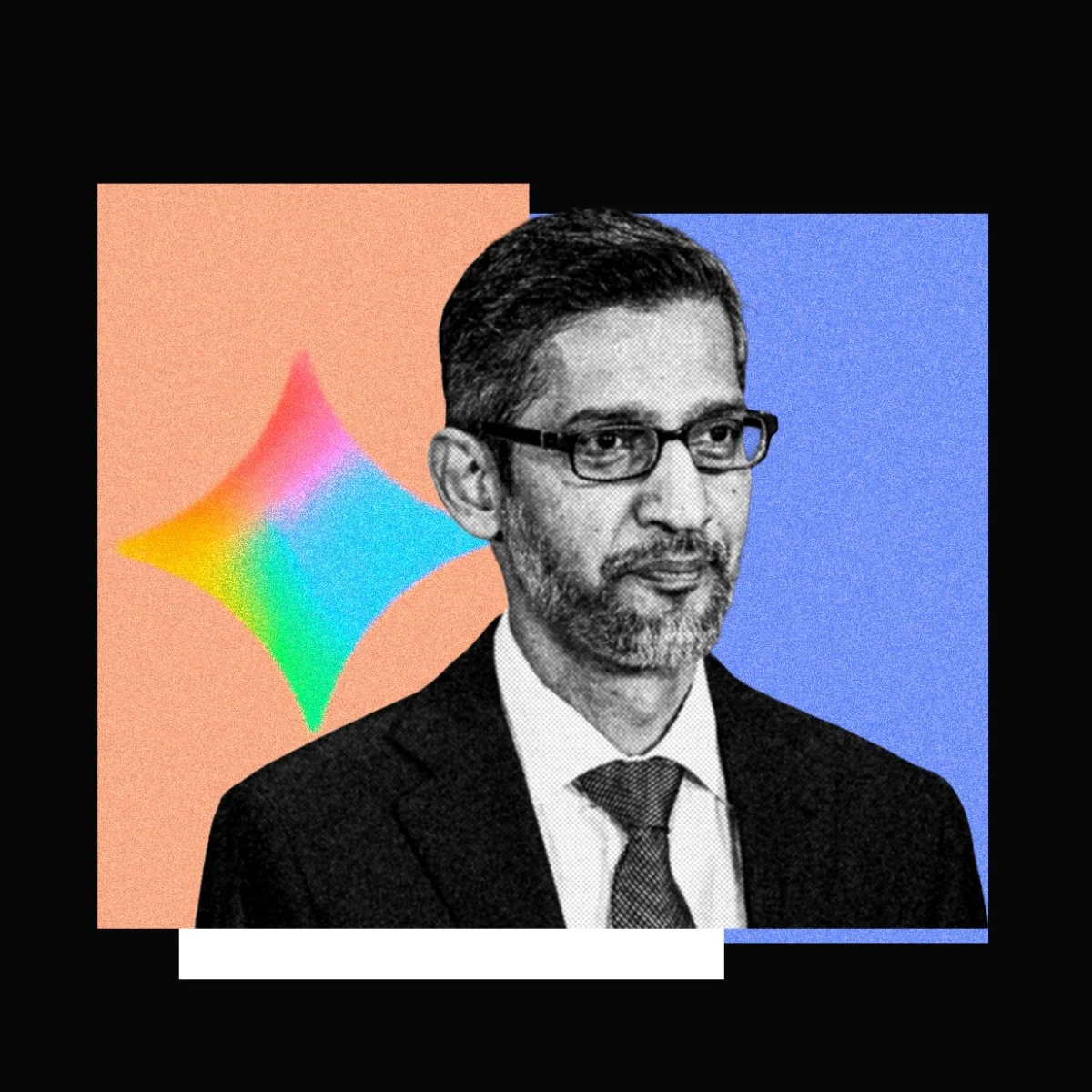

To understand the current pivot, it is necessary to examine the trajectory of browser agents over the past eighteen months. At the 2025 Google I/O conference, CEO Sundar Pichai positioned Project Mariner as a centerpiece of the company’s vision for a helpful AI. At the time, the industry was captivated by the prospect of "web agents": AI systems that could look at a browser window, identify buttons, scroll through lists, and fill out forms exactly like a human user. This approach was seen as the "holy grail" of consumer AI because it required no specialized APIs; if a human could do it on a website, the AI could do it too.

Following Google’s lead, other major players like OpenAI and Perplexity launched their own consumer-facing browser agents. These products promised to automate mundane tasks such as booking flights, ordering groceries, or conducting deep-dive research across multiple tabs. However, despite the initial excitement, market data suggests that consumer adoption has significantly lagged behind industry expectations. Perplexity’s "Comet" browser agent, for instance, reported approximately 2.8 million weekly active users in December 2025. While seemingly substantial, this figure pales in comparison to the broader user bases of standard AI search tools. Even more telling are the figures for OpenAI’s ChatGPT Agent, which reportedly saw its weekly active user base dip below the one-million mark in recent months. When contrasted with the hundreds of millions of users who engage with the standard ChatGPT interface for text generation and coding, browser agent usage appears to be little more than a niche interest.

Technical Friction and the Efficiency Gap

The primary hurdle for browser-based agents like Project Mariner has been the inherent inefficiency of graphical user interface (GUI) navigation. Most current browser agents operate by taking frequent screenshots of the user’s screen, processing those images through a multimodal large language model (LLM), and then calculating the coordinates for the next click or keystroke. This process is computationally expensive and introduces significant latency. Furthermore, visual interpretation is prone to errors; a slight change in a website’s layout or a pop-up advertisement can easily confuse an agent relying on visual cues.
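
The loop described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not how Project Mariner or any particular product is implemented: `Action`, `run_gui_agent`, and the three callables passed into it are hypothetical names, and the "model" in the demo is a stub that replays a scripted list of actions. The point is only that every step costs one screenshot and one multimodal model call.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Action:
    """A single GUI action chosen by the model (hypothetical structure)."""
    kind: str          # "click", "type", or "done"
    x: int = 0
    y: int = 0
    text: str = ""


def run_gui_agent(
    capture_screenshot: Callable[[], bytes],
    ask_model: Callable[[bytes, str], Action],
    perform: Callable[[Action], None],
    goal: str,
    max_steps: int = 50,
) -> int:
    """Run the screenshot -> model -> action loop and return the model calls made."""
    model_calls = 0
    for _ in range(max_steps):
        image = capture_screenshot()      # one screenshot per step
        action = ask_model(image, goal)   # one multimodal model call per step
        model_calls += 1
        if action.kind == "done":
            break
        perform(action)                   # click or type at the chosen coordinates
    return model_calls


def demo() -> Tuple[int, int]:
    """Drive the loop with stubs; returns (model calls, actions performed)."""
    scripted = iter([
        Action("click", x=120, y=340),
        Action("type", text="SFO to JFK"),
        Action("click", x=480, y=610),
        Action("done"),
    ])
    performed: List[Action] = []
    calls = run_gui_agent(
        capture_screenshot=lambda: b"fake-png-bytes",  # stand-in for a real capture
        ask_model=lambda image, goal: next(scripted),  # stand-in for a real model
        perform=performed.append,
        goal="search for a flight",
    )
    return calls, len(performed)
```

Even in this toy run, three GUI actions require four model calls, because the agent needs one more look at the screen to decide that it is finished.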

Kian Katanforoosh, CEO of the AI upskilling platform Workera and a lecturer at Stanford University, suggests that the industry is realizing that the GUI is not the most effective medium for AI communication. According to Katanforoosh, the recent success of agents like Claude Code and OpenClaw stems from their reliance on the command-line interface (CLI), or terminal. Because LLMs are natively text-based, interacting with a text-based terminal is fundamentally more efficient than interpreting pixels. Katanforoosh estimates that terminal-based agents can achieve the same outcomes as browser agents in 10 to 100 times fewer steps, as they can interact directly with file systems and system processes without the overhead of rendering and "seeing" a webpage.
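
To make the scale of that estimate concrete, the arithmetic can be sketched as below. The function name and the 200-step example are hypothetical; only the 10x to 100x range comes from Katanforoosh's estimate.

```python
from typing import Tuple


def terminal_step_range(gui_steps: int) -> Tuple[int, int]:
    """Return (fewest, most) terminal steps implied by a 10x to 100x reduction."""
    fewest = max(1, gui_steps // 100)  # best case: 100 times fewer steps
    most = max(1, gui_steps // 10)     # conservative case: 10 times fewer steps
    return fewest, most
```

Under that estimate, a task that takes a browser agent 200 steps would take a terminal agent somewhere between 2 and 20.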

Evolutionary Branches in Computer Use Technology

Despite the challenges facing browser-specific agents, research into "computer use" remains a high-stakes frontier. The industry is currently split between improving visual-based agents and perfecting text-based coding agents. On the visual front, startups are attempting to solve the latency problem through innovative architectural changes. For example, Standard Intelligence recently released a model trained on video data rather than static screenshots. By utilizing a proprietary video encoder, the startup claims it can compress visual information into an AI model’s context window 50 times more efficiently than previous methods. In a high-profile demonstration, the company showed its model navigating a computer keyboard and even driving a vehicle autonomously through San Francisco, suggesting that visual-based AI still has a future in environments where text-based APIs are unavailable.

However, the prevailing trend among major AI labs is a decisive move toward coding agents. These systems, such as Anthropic’s Claude Cowork or Perplexity’s "Personal Computer" tool, leverage the agent’s ability to write and execute code to solve non-coding problems. If a user needs to organize a budget, rather than having an agent "click" through an Excel spreadsheet, a coding agent can simply write a script to parse data and generate a custom dashboard. This "software-defined" approach to task completion is proving to be far more reliable and versatile than the "human-mimicry" approach of browser agents.
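
As a rough illustration of the budget example, the kind of script a coding agent might produce could look like the sketch below. The column names, the sample rows, and both function names are made up for illustration; a real agent would adapt the script to the user's actual file, and the "dashboard" here is just a plain-text summary.

```python
import csv
import io
from collections import defaultdict
from typing import Dict

# Made-up sample data standing in for a user's exported spreadsheet.
SAMPLE_CSV = """date,category,amount
2025-01-03,Groceries,82.50
2025-01-05,Rent,1500.00
2025-01-09,Groceries,45.25
2025-01-12,Transport,30.00
"""


def summarize_budget(csv_text: str) -> Dict[str, float]:
    """Parse CSV text and total the amounts for each category."""
    totals: Dict[str, float] = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["category"]] += float(row["amount"])
    return dict(totals)


def render_dashboard(totals: Dict[str, float]) -> str:
    """Render a plain-text summary, largest category first."""
    grand_total = sum(totals.values())
    lines = []
    for category, amount in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        share = amount / grand_total * 100 if grand_total else 0.0
        lines.append(f"{category:<12}{amount:>10.2f}{share:>7.1f}%")
    lines.append(f"{'Total':<12}{grand_total:>10.2f}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_dashboard(summarize_budget(SAMPLE_CSV)))
```

The whole task is a single parse-and-aggregate pass over the data, with no clicking through cells or menus.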

Strategic Implications and the 80/20 Rule

The shift in Google’s Project Mariner team reflects a broader strategic realization: the most valuable AI agents may not be the ones that act like humans, but the ones that act like sophisticated software engineers. By folding Mariner’s research into the Gemini ecosystem, Google is likely looking to create a hybrid system that can handle both the "back-end" logic of coding and the "front-end" necessity of navigating legacy web interfaces.

Ang Li, CEO of the startup Simular and a former researcher at Google DeepMind, argues that while coding agents are the current favorites, GUI-based computer use will always be a necessary component of the AI stack. Li posits an "80/20 split," where 80 percent of tasks can be solved more efficiently through terminals and APIs, but the remaining 20 percent—such as interacting with healthcare portals, insurance websites, or legacy enterprise software—will always require a visual interface. For these "API-less" environments, the work done by the Project Mariner team remains essential infrastructure.

Looking Ahead: Trust and Adoption Hurdles

As Google and its competitors refine their agent strategies, the ultimate challenge remains user trust. Whether an agent uses a browser or a terminal, the stakes of "agentic" action are significantly higher than those of simple text generation. If a chatbot provides a wrong answer, the cost is a minor inconvenience; if an AI agent makes a mistake while booking a non-refundable flight or modifying a critical system file, the consequences are financial and operational.

Executive leadership at OpenAI has expressed a desire for its Codex models to eventually power general-purpose agents within ChatGPT, moving away from specialized browser tools toward a more integrated "do-it-all" assistant. Google’s integration of Mariner into Gemini suggests a similar path toward a unified agent experience. However, until these systems can demonstrate near-perfect reliability, they are likely to remain tools for developers and power users rather than the general public. The transition of the Project Mariner team marks the end of the "browser agent" as a standalone hype cycle and the beginning of a more mature, integrated era of AI-driven computer automation. The focus has moved from making AI look like a person using a computer to making the computer natively intelligent.