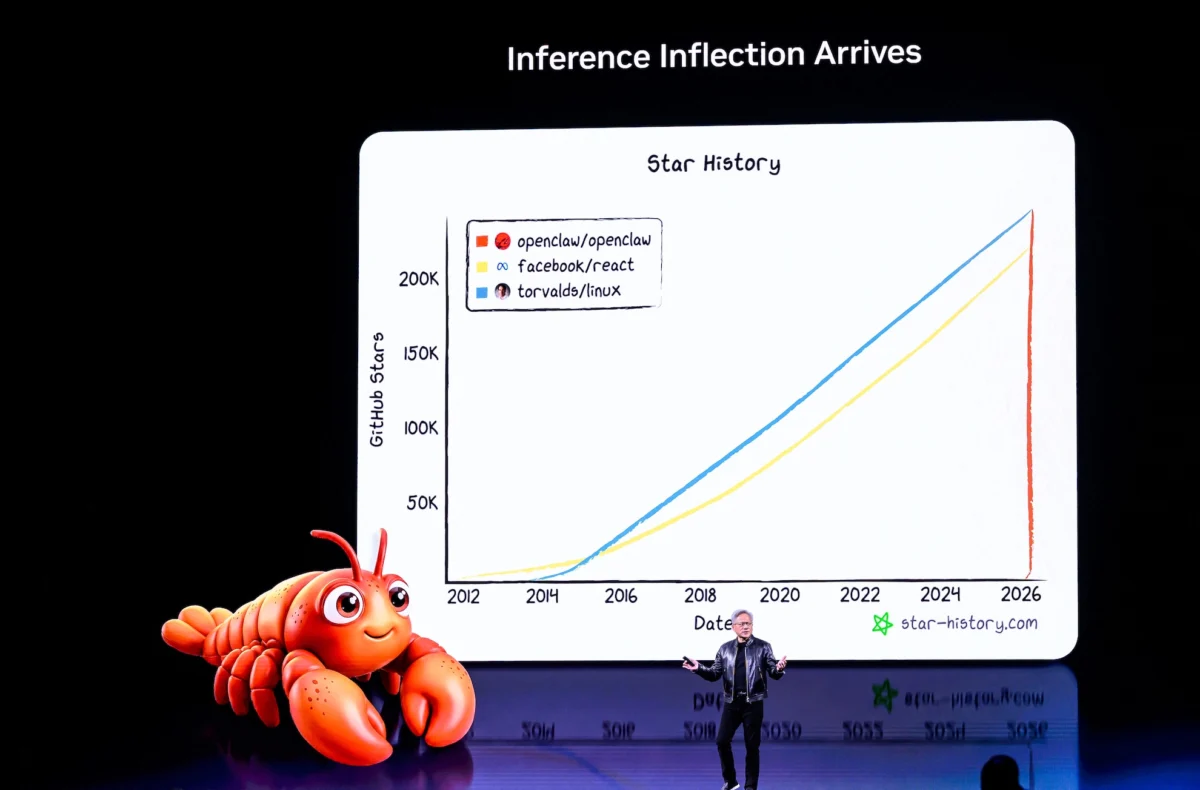

Nvidia CEO Jensen Huang, in his signature leather jacket, took the stage at GTC, the company’s annual developer conference, this week for a two-and-a-half-hour keynote that laid out a sweeping declaration of intent for the future of artificial intelligence. Huang projected $1 trillion in AI chip sales through 2027, argued that every company will need an "OpenClaw strategy," and capped the event with a memorable, if rambling, Olaf robot whose microphone ultimately had to be cut. The overarching message was unequivocal: Nvidia wants to be the foundational technology provider for virtually everything, from AI training to autonomous vehicles to the attractions at Disney parks. That ambition underscores how far Nvidia has moved from its roots as a graphics processing unit (GPU) maker toward becoming a central architect of global AI infrastructure.

GTC 2026: A Landmark Event for Artificial Intelligence

The GPU Technology Conference (GTC), Nvidia’s flagship annual event, has grown from a niche gathering for graphics developers into one of the most important forums for artificial intelligence, accelerated computing, and enterprise technology. GTC 2026, held as companies worldwide race to adopt AI, marked a critical juncture for Nvidia. The company used the event to showcase its latest innovations and lay out a roadmap meant to cement its dominance. The conference is traditionally where Nvidia unveils new architectures, software platforms, and ecosystem advancements for an audience of developers, researchers, industry leaders, and investors. This year’s event was especially charged, reflecting how generative AI and large language models (LLMs) have reshaped the technology landscape over the past few years. Nvidia arrived with immense momentum. It already holds an estimated 80-90% share of the data center AI chip market, and its valuation has soared alongside demand for its specialized hardware. Industry observers were eager to hear how the company planned to capitalize on the AI revolution and keep driving it.

The Trillion-Dollar Prophecy: Dissecting Nvidia’s Ambitious Sales Targets

Jensen Huang’s projection of $1 trillion in AI chip sales through 2027 is as audacious as it is deliberate, reflecting Nvidia’s read of where the market is headed. For context, the global AI chip market, spanning everything from data centers to edge computing, was estimated at tens of billions of dollars a year in the mid-2020s. Huang’s forecast therefore implies a steep acceleration in demand for Nvidia’s specialized processors. The figure is less a sales target than a statement about how widely Nvidia expects AI to be adopted across industries. Analysts have largely been bullish on Nvidia’s prospects, given its entrenched position and technological lead. Research firms such as Grand View Research and IDC have repeatedly revised their AI market growth forecasts upward, with some projecting that the global AI market, including software, hardware, and services, will exceed $1.8 trillion by 2030. The two numbers are not directly comparable. Nvidia’s is cumulative chip sales over a roughly three-year span (about $333 billion per year), while the market forecasts are annual figures for the entire AI economy. Taken together, though, they suggest that hardware, and Nvidia’s in particular, is expected to capture a significant share of that growth.
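The annualized figure rests on a simplifying assumption: that the $1 trillion is spread evenly across the three years. Actual sales would more likely ramp up over the period. Under that even-split assumption, the arithmetic works out as follows:

$$
\frac{\$1{,}000\ \text{billion}}{3\ \text{years}} \approx \$333\ \text{billion per year}
$$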

The Technological Backbone: Blackwell and Vera Rubin

Central to this trillion-dollar vision are Nvidia’s Blackwell and Vera Rubin platforms. Blackwell, the successor to the Hopper architecture that powered the H100 GPU, is the generation currently driving Nvidia’s data center sales. Vera Rubin, which pairs Rubin GPUs with Nvidia’s Vera CPU, is next on the company’s roadmap. Detailed specifications for upcoming parts often stay under wraps until close to launch. Each generation, however, aims for large gains in AI training and inference performance and efficiency, achieved through advances in the core GPU architecture, interconnect technologies such as NVLink, and advanced packaging. These platforms are about more than raw computational power. They reflect a holistic approach to system design that integrates Nvidia’s own CPUs (Grace, and Vera in Rubin-based systems), high-bandwidth memory (HBM), and the CUDA software stack into a single high-performance environment optimized for AI workloads. Deployed at scale, often as tens of thousands of interconnected GPUs in a data center, these systems form the backbone of the AI factories Huang envisions.

The "OpenClaw Strategy": A New Paradigm for AI Security and Ecosystem Development

One of the most intriguing moments in Huang’s keynote was his declaration that every company needs an "OpenClaw strategy." The name invites interpretation: it can be read as pairing openness with proprietary control, or as describing a robust, integrated ecosystem that is both accessible and tightly managed. Huang did not lay out the full scope of such a strategy, so the readings below are interpretations rather than Nvidia’s stated plan. In context, the phrase most plausibly points to Nvidia’s approach to fostering secure, scalable, and interconnected AI infrastructure.

Historically, the tech industry has grappled with the tension between open-source collaboration and proprietary control. Nvidia, with its CUDA platform, has successfully built a proprietary software ecosystem around its hardware, which has become a significant competitive moat. "OpenClaw" could signify several strategic thrusts:

- Secure AI Infrastructure: As AI systems become more pervasive and critical, security vulnerabilities pose immense risks. An "OpenClaw strategy" could emphasize end-to-end security, from a hardware root of trust to secure software development practices and robust network protocols, protecting the integrity and confidentiality of AI models and data. This would address growing concerns about data privacy, model tampering, and malicious AI applications.

- Ecosystem Integration and Control: If "open" signals accessibility, the "claw" half of the name could point to Nvidia’s continued effort to integrate its hardware, software (CUDA, cuDNN), and services into a cohesive, optimized stack. That makes AI applications easier to deploy and scale, but it also deepens developers’ reliance on Nvidia’s platform. It could involve standardized interfaces, APIs, and frameworks that are open for developers to build on yet deeply optimized for Nvidia’s underlying architecture.

- Hybrid Cloud and Edge AI: The strategy might also address the need for flexible AI deployment across various environments – from hyperscale data centers to enterprise private clouds and distributed edge devices. "OpenClaw" could refer to a set of technologies and standards that allow for consistent AI development and deployment across these heterogeneous environments, potentially leveraging technologies like Nvidia’s AI Enterprise software suite and federated learning capabilities.

- Addressing Supply Chain and Data Sovereignty: In an era of increasing geopolitical complexity and data localization requirements, an "OpenClaw" strategy could also imply a framework for building AI infrastructure that is resilient to supply chain disruptions and respects national data sovereignty. This might involve regional manufacturing partnerships, secure chip design, and software solutions that allow for localized AI operations without compromising global consistency.

The call for an "OpenClaw strategy" implicitly acknowledges the increasing complexity and criticality of AI infrastructure. It signals Nvidia’s intent not just to sell chips, but to provide a comprehensive, secure, and integrated platform that companies can trust for their most sensitive and strategic AI initiatives.

Nvidia’s Ubiquitous Vision: From AI Training to Disney Parks

Huang’s declaration that Nvidia wants to be "foundational to everything" may sound like hyperbole. It does, however, reflect the company’s aggressive expansion into diverse sectors, where it is positioning its accelerated computing platform as the engine of modern innovation.

- AI Training and Inference: This remains Nvidia’s core strength. Its GPUs are the workhorses behind the training of the largest AI models, including LLMs, generative AI, and advanced computer vision systems. The demand for faster, more powerful chips for these tasks continues unabated as AI models grow in complexity and size.

- Autonomous Vehicles: Nvidia’s DRIVE platform is a leading solution for self-driving cars, powering everything from advanced driver-assistance systems (ADAS) to fully autonomous vehicles. This includes hardware, software, and a robust simulation environment (Nvidia Omniverse) for testing and validation. The company partners with numerous automotive manufacturers and robotaxi companies, aiming to be the "AI brain" of future mobility.

- Robotics: Beyond vehicles, Nvidia’s Jetson platform and Isaac robotics platform are enabling a new generation of intelligent robots for manufacturing, logistics, healthcare, and exploration. These platforms provide the computational power and AI capabilities for perception, navigation, manipulation, and human-robot interaction.

- Healthcare and Life Sciences: Nvidia is deeply involved in accelerating drug discovery, medical imaging, genomics, and digital pathology. Its Clara platform provides an AI-powered framework for healthcare, leveraging GPUs for faster analysis of massive datasets and more accurate diagnostic tools.

- Enterprise AI and Cloud Computing: Nvidia partners with all major cloud providers and enterprises to deploy AI infrastructure, offering specialized instances and services powered by its GPUs. This enables businesses to leverage AI for data analytics, customer service, fraud detection, and operational optimization.

- Digital Twins and Industrial Metaverse: The mention of Disney parks is particularly illustrative of Nvidia’s foray into the "industrial metaverse" and digital twins. Through its Omniverse platform, Nvidia enables the creation of highly realistic, physically accurate simulations of real-world environments, factories, cities, and even entertainment venues. For Disney, this could mean simulating park operations, designing new attractions, optimizing guest flow, or even creating hyper-realistic virtual experiences. This vision extends to manufacturing, architecture, engineering, and construction, where digital twins can optimize processes, predict outcomes, and reduce costs.

The brief, humorous interlude with the Olaf robot, which began to ramble and had its mic cut, served as a lighthearted reminder of the ongoing challenges and sometimes unpredictable nature of AI, even amidst such grand pronouncements. It humanized the massive technological undertaking and offered a moment of levity in an otherwise intensely focused technical presentation.

Market Reactions and Competitive Landscape

The market’s reaction to Nvidia’s GTC announcements was predictably robust, with investor confidence buoyed by the aggressive growth projections and the clarity of the company’s strategic vision. Nvidia’s stock often serves as a bellwether for the broader AI sector, and strong performance from the company tends to ripple across the technology market.

However, such dominance inevitably invites increased competition. Rivals like Advanced Micro Devices (AMD) are aggressively developing their own AI accelerators, such as the Instinct MI300X, aiming to capture a larger share of the lucrative data center market. Intel, through its Gaudi accelerators and strategic acquisitions, is also vying for a stronger position in AI hardware. Major cloud providers are increasingly designing their own custom AI silicon to optimize cost and performance in their hyperscale data centers. These include Google (Tensor Processing Units, or TPUs), Amazon (Inferentia and Trainium chips), and Microsoft (Maia). That trend could reduce their reliance on external vendors like Nvidia for certain workloads.

The trillion-dollar projection, while a testament to Nvidia’s leadership, also marks a potential flashpoint for competition. The market is large enough for multiple players, but Nvidia’s established ecosystem and steady pace of innovation are formidable barriers to entry. Its move into full-stack solutions, combining hardware, software, and services, strengthens its hand further, because it sells complete, optimized AI platforms rather than just components.

The Startup Conundrum: Navigating Nvidia’s Expanding Ecosystem

Nvidia’s growing web of AI infrastructure partnerships, while creating immense opportunities, also presents a complex landscape for startups. This was a key point of discussion for the TechCrunch Equity podcast team, highlighting the duality of this expansive ecosystem.

Opportunities for Startups:

- Access to Cutting-Edge Technology: Nvidia provides startups with unparalleled access to the most powerful AI computing resources. Through cloud partnerships, developer programs, and specialized SDKs, even small teams can leverage supercomputing-level capabilities to train and deploy sophisticated AI models. This democratizes access to high-end AI research and development.

- Ecosystem Support and Tools: Nvidia offers a rich ecosystem of software, libraries (CUDA, cuDNN, TensorRT), and frameworks that accelerate AI development. Startups can build on these established tools, reducing their time-to-market and allowing them to focus on their unique intellectual property rather than reinventing foundational AI infrastructure.

- Partnership and Visibility: Collaborating with Nvidia, whether through its Inception program for startups or direct technical partnerships, can provide crucial validation, visibility, and potential access to larger enterprise customers that Nvidia serves. This can be a significant boost for nascent companies looking to scale.

- Specialized Solutions: As Nvidia expands into niche areas like autonomous vehicles (DRIVE), robotics (Isaac), and healthcare (Clara), it creates new vertical markets for startups to build specialized applications and services on top of Nvidia’s platforms.

Challenges for Startups:

- High Costs: Accessing Nvidia’s top-tier hardware, especially the latest generation GPUs, can be extremely expensive. While cloud providers offer pay-as-you-go models, intense AI workloads can quickly accrue significant costs, posing a financial burden for bootstrapped or early-stage startups.

- Vendor Lock-in and Dependency: Deep integration with Nvidia’s CUDA ecosystem, while offering performance benefits, can lead to vendor lock-in. Migrating AI models and codebases to alternative hardware platforms (e.g., AMD GPUs, custom ASICs) can be a challenging and resource-intensive endeavor. This dependency raises concerns about pricing power and strategic flexibility for startups.

- Competition for Resources: As demand for Nvidia’s chips skyrockets, startups may have to compete with larger enterprises and hyperscale cloud providers for the latest hardware, which can mean longer waits or higher prices.

- Nvidia’s Own Vertical Integration: As Nvidia expands its offerings to include more software and platform services, it can increasingly compete with some of its ecosystem partners or even develop solutions that overlap with startup offerings. Navigating this dynamic requires careful strategic positioning.

For startups, the key lies in strategically leveraging Nvidia’s strengths while mitigating the risks of over-reliance. This involves focusing on unique value propositions, exploring multi-cloud or hybrid strategies, and carefully managing costs.

TechCrunch Equity Podcast: Deeper Insights into Nvidia’s AI Play

The TechCrunch Equity podcast covers the fast-moving worlds of technology and venture capital. This episode featured co-hosts Kirsten Korosec (transportation editor), Anthony Ha (weekend editor), and Sean O’Kane (reporter), along with audio producer Theresa Loconsolo. They broke down Nvidia’s GTC announcements, going beyond the headlines to examine the practical implications of Nvidia’s strategy, especially for startups.

Kirsten Korosec, drawing on her extensive background covering transportation, offered perspective on how Nvidia’s autonomous vehicle ambitions could shape the future of mobility startups. Anthony Ha, with his broad tech reporting experience, likely provided a wider view of market dynamics and investor sentiment. Sean O’Kane, known for his coverage of the transportation industry and consumer technology, contributed insights into the competitive landscape and technological shifts. Theresa Loconsolo produced the episode.

The podcast’s focus on "what Nvidia’s growing web of AI infrastructure partnerships actually means for startups" is especially pertinent. It speaks to the core challenges and opportunities facing young companies in a market increasingly dominated by a few powerful players. By unpacking Nvidia’s "foundational to everything" strategy, the Equity team helped listeners see how grand visions translate into concrete effects on innovation, investment, and market entry for new ventures. Episodes are available on YouTube, Apple Podcasts, Overcast, and Spotify, and the show posts updates on X and Threads at @EquityPod.

Nvidia’s GTC 2026 keynote, led by Jensen Huang, was far more than a product launch: it was a blueprint for the future of artificial intelligence. The projection of $1 trillion in AI chip sales through 2027 underscores the company’s ambition to become the central nervous system of an AI-powered world. So do the call for an "OpenClaw strategy" and the vision of Nvidia as the foundational layer for every industry. This trajectory promises major technological advances and economic growth. It also raises critical questions about market competition, ecosystem dependency, and the strategic positioning of the many startups trying to innovate in an expanding and increasingly centralized AI landscape. The debate these announcements have sparked is likely to shape the technology industry for years to come.