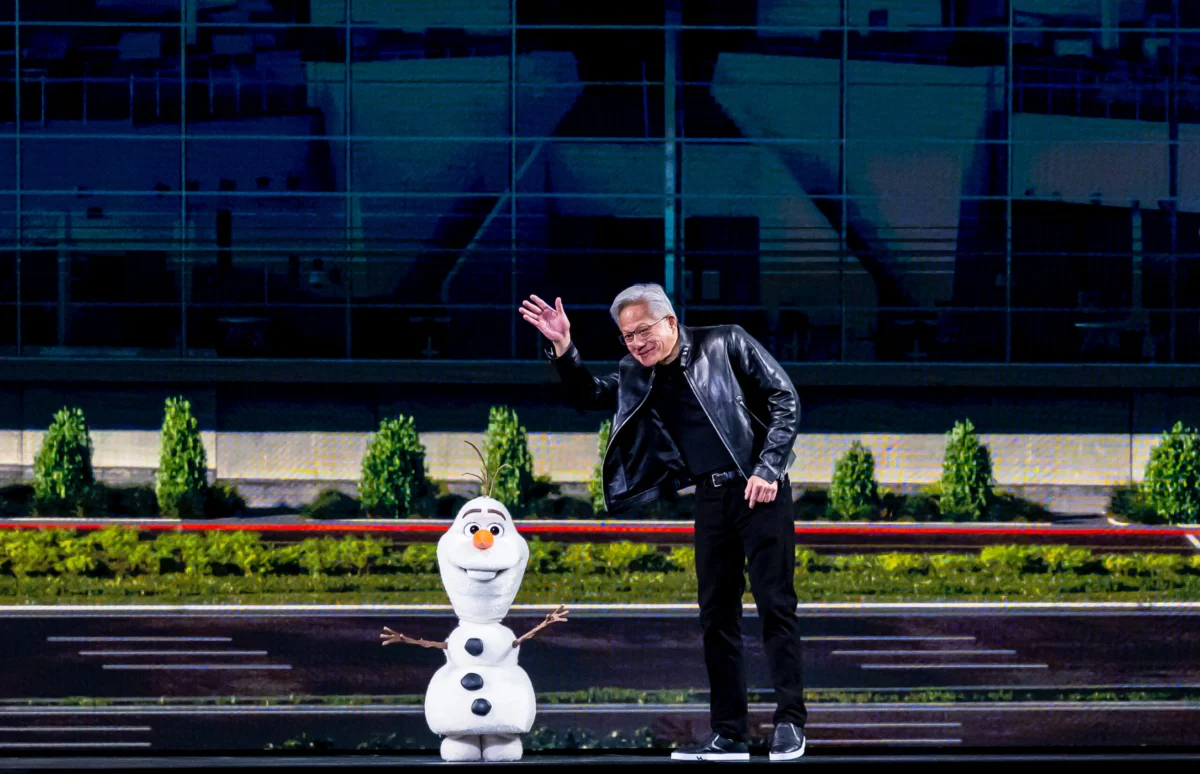

Jensen Huang, the charismatic CEO of Nvidia, commanded the stage this week at the company’s annual GTC conference, delivering a sprawling two-and-a-half-hour keynote in his signature leather jacket. The central message was clear: Nvidia intends to be the foundational technology for virtually every aspect of the artificial intelligence revolution. Huang’s address was punctuated by a staggering projection of $1 trillion in AI chip sales through 2027, a declaration that every company must adopt an "OpenClaw strategy," and a memorable, if somewhat rambling, demonstration involving an Olaf robot whose microphone ultimately had to be cut. Nvidia's ambitions are sweeping. They run from AI model training to the systems powering autonomous vehicles and even the immersive experiences at Disney parks, and the company is positioning itself as the indispensable architect of the future.

The GTC conference, often dubbed the "AI Woodstock," serves as Nvidia’s premier platform to showcase its latest advancements and articulate its strategic vision to developers, researchers, and industry leaders worldwide. This year’s event underscored Nvidia’s increasingly critical role in the global technology landscape, particularly as the demand for high-performance computing to fuel generative AI models continues its exponential surge. Huang’s keynote was not merely a series of product announcements but a comprehensive blueprint for how Nvidia aims to embed its technology across diverse sectors, solidifying its market dominance in an era defined by intelligent machines.

Unpacking the Trillion-Dollar Projection

The projection of $1 trillion in AI chip sales through 2027 is a bold declaration, reflecting both Nvidia’s aggressive growth strategy and the profound market shift towards AI-centric computing. The figure exceeds many conventional market forecasts. It signals Nvidia’s confidence in its next-generation hardware architectures, such as the Blackwell and Vera Rubin platforms, which are designed to power the most demanding AI workloads.

For perspective, the entire global semiconductor market was valued at approximately $573 billion in 2022, and AI chips were a significant but still relatively small segment of it. The trillion-dollar figure is cumulative over several years, while the $573 billion is a single year's total, so the two numbers are not directly comparable. The scale is still striking. Huang’s forecast implies that Nvidia expects to capture a substantial share of rapidly accelerating AI hardware spending, driven by unprecedented demand from hyperscale cloud providers, enterprise data centers, and specialized industries.

Industry analysts have largely reacted to this projection with a mixture of awe and cautious optimism. The target is ambitious, but the underlying market dynamics lend credibility to Nvidia’s outlook. Large language models (LLMs) and generative AI applications are being adopted rapidly across virtually every industry vertical. That adoption has created an insatiable appetite for the parallel processing capabilities that Nvidia’s graphics processing units (GPUs) provide.

Companies are investing in AI infrastructure to gain competitive advantages, automate processes, and develop new products and services. This capital expenditure, often referred to as the "AI gold rush," is directed primarily towards the advanced silicon and interconnected systems that Nvidia specializes in. The company takes a full-stack approach that spans hardware, software (the CUDA platform), and networking. The resulting ecosystem makes Nvidia difficult for competitors to dislodge.

The ‘OpenClaw Strategy’: Nvidia’s Ubiquitous Ambition

Central to Huang’s keynote was the concept of an "OpenClaw strategy," a term that encapsulates Nvidia’s vision for deep, pervasive integration across all computational domains. While the specific details surrounding "OpenClaw" are still emerging, hints from related discussions suggest a focus on secure, open-standard architectures that allow Nvidia’s powerful AI engines to be deployed and managed seamlessly across diverse environments. The initial reference to "OpenClaw" solving Nvidia’s "biggest problem – security" suggests a framework designed to ensure the integrity and robustness of AI deployments, a critical concern as AI systems become more autonomous and interconnected.

This strategy extends beyond merely selling chips. It is about building a foundational platform that developers and enterprises can rely on at every stage of the AI lifecycle, from initial data processing and model training to inference and deployment at the edge. Huang highlighted applications across several fields:

- AI training, where Nvidia’s GPUs are virtually ubiquitous.
- Autonomous vehicles, where the company’s Drive platform is a leading solution.
- Entertainment, exemplified by his mention of Disney parks.

For autonomous systems, Nvidia’s Omniverse, a real-time simulation and collaboration platform, plays a crucial role in developing and testing AI models in virtual environments before real-world deployment. In entertainment, AI is being used for everything from content creation to personalized user experiences and advanced robotics. Nvidia seeks to provide the underlying computational power in all of these areas.

Nvidia’s Expanding Ecosystem and Partnerships

Nvidia’s "growing web of AI infrastructure partnerships" was another recurring theme, and Huang’s keynote provided ample evidence of this strategic expansion. Nvidia is no longer just a chip vendor. It is increasingly a provider of end-to-end AI solutions, collaborating closely with cloud service providers, hardware manufacturers, and software developers. These partnerships are crucial to the "OpenClaw strategy," because they carry Nvidia’s technology into every layer of the computational stack.

For instance, Nvidia works extensively with major cloud providers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud, which host vast fleets of Nvidia GPUs to power their AI services. These collaborations extend to joint development efforts on new AI platforms and services. In the enterprise sector, Nvidia partners with server manufacturers and software companies to integrate its AI platforms into existing data center infrastructure, facilitating the adoption of AI by businesses of all sizes. The company’s CUDA software platform, a parallel computing platform and programming model, has become the de facto standard for GPU programming, creating a powerful moat around its hardware ecosystem. This robust ecosystem ensures that as AI innovation accelerates, developers and companies naturally gravitate towards Nvidia’s established and widely supported solutions.

Market Context and Competitive Landscape

Nvidia’s dominance in the AI chip market is undeniable, with an estimated market share exceeding 80% for AI accelerators in data centers. However, the competitive landscape is not static. Major players like Intel and AMD are aggressively investing in their own AI hardware solutions, including specialized AI accelerators and CPUs with integrated AI capabilities. Hyperscale cloud providers, recognizing their reliance on Nvidia, are also developing custom AI chips (e.g., Google’s TPUs, Amazon’s Trainium and Inferentia, Microsoft’s Maia).

Despite these efforts, Nvidia maintains a significant lead, primarily due to its early-mover advantage, the strength of its CUDA software ecosystem, and its rapid innovation cycle. The company consistently delivers more powerful and efficient GPUs and pairs them with a comprehensive software stack, which creates a high barrier to entry for competitors. Demand for AI compute is vast enough that there is likely room for multiple players, yet Nvidia is clearly positioned as the primary beneficiary of the current boom. Competitive pressure, in turn, pushes Nvidia to innovate continuously, keeping its hardware and software at the forefront of AI research and deployment.

Implications for Startups and the Broader Industry

The TechCrunch Equity podcast discussion highlighted the implications of Nvidia’s growing influence for startups. For many AI startups, Nvidia’s ecosystem presents both immense opportunities and significant challenges. On one hand, Nvidia’s robust platforms, extensive developer tools, and widespread adoption provide a stable and powerful foundation upon which startups can build and deploy their AI solutions. Access to cutting-edge GPUs and the CUDA ecosystem accelerates development and allows startups to focus on their core AI algorithms and applications.

On the other hand, Nvidia’s dominance can mean high hardware costs and potential vendor lock-in. Because AI chips are so specialized, startups often have few alternatives, particularly for state-of-the-art model training. That can be a significant financial burden for early-stage companies.

The podcast hosts, Kirsten Korosec, Anthony Ha, and Sean O’Kane, likely examined how startups navigate this environment. Options include seeking partnerships, optimizing their AI models for efficiency, or exploring alternative hardware where feasible. The broader industry also faces questions about concentration of power, supply chain resilience, and the potential for a single company to dictate the pace and direction of AI innovation.

The AI Gold Rush: Demand and Supply Dynamics

The current era is often described as an "AI gold rush," with companies worldwide scrambling to acquire the computational resources necessary to develop and deploy AI. This unprecedented demand has created supply chain pressures, with advanced chip manufacturing becoming a critical bottleneck. Nvidia’s ability to navigate these challenges, secure manufacturing capacity, and deliver its cutting-edge products is paramount to its projected growth. The geopolitical implications of chip manufacturing, particularly in regions like Taiwan, add another layer of complexity to the supply chain dynamics.

Huang’s keynote at GTC was not just about the technical prowess of Nvidia’s chips; it was about demonstrating the company’s strategic foresight in anticipating and meeting this global demand. By projecting $1 trillion in sales, Nvidia is signaling its intent to be the primary enabler of this gold rush, providing the shovels, picks, and infrastructure for the prospectors of AI. The company’s investments in research and development, its expansion into new markets, and its relentless pursuit of technological breakthroughs are all geared towards sustaining this leadership position.

Beyond the Keynote: A Glimpse into the Future

The overall message from this year’s GTC was a powerful affirmation of Nvidia’s long-term strategy: to be the ubiquitous computing platform for the age of AI. Huang’s vision extends beyond hardware sales to fostering an entire ecosystem that drives innovation, from fundamental AI research to the deployment of intelligent applications in every facet of life. The humorous, albeit glitchy, Olaf robot demonstration was a reminder that the future, however promising, is still being built, with experimentation and iterative development at its core.

The GTC conference traditionally attracts tens of thousands of attendees, both virtually and in person, reflecting the broad interest and impact of Nvidia’s work. The discussions, workshops, and technical sessions held throughout the week typically delve deeper into the specifics of the announced platforms, showcasing real-world applications and fostering collaboration among the developer community. This collective engagement is vital for accelerating the adoption and refinement of Nvidia’s technologies, further solidifying its foundational role.

Analyst Reactions and Market Outlook

Following Huang’s bold predictions, financial analysts have been recalibrating their models for Nvidia. The $1 trillion figure is ambitious, but many acknowledge Nvidia’s strong execution track record and the robust market trends supporting AI growth. Major investment banks may revise their price targets and revenue forecasts accordingly, and the stock market’s initial reaction will offer a first snapshot of investor sentiment. Technology analysts, meanwhile, are focusing on the technical details of the Blackwell and Vera Rubin architectures, assessing their performance and competitive advantages against rivals. The consensus appears to be that Nvidia is not merely riding the AI wave but actively shaping it, establishing standards and platforms that will influence the industry for years to come.

The Road Ahead for Nvidia

As Nvidia moves towards its ambitious 2027 goal, the company faces continued challenges, including managing complex global supply chains, fending off increasing competition, and adapting to the rapidly evolving landscape of AI research. However, with its strong market position, innovative product pipeline, and comprehensive ecosystem, Nvidia appears well-equipped to capitalize on the transformative potential of artificial intelligence. Jensen Huang’s keynote at GTC was not just an announcement of new products; it was a powerful statement of intent, signaling Nvidia’s unwavering commitment to being the central nervous system of the AI-powered future. The discussions and analysis sparked by the keynote, such as those on the TechCrunch Equity podcast, will continue to unpack the profound implications of Nvidia’s strategy for startups, established enterprises, and the entire global economy.